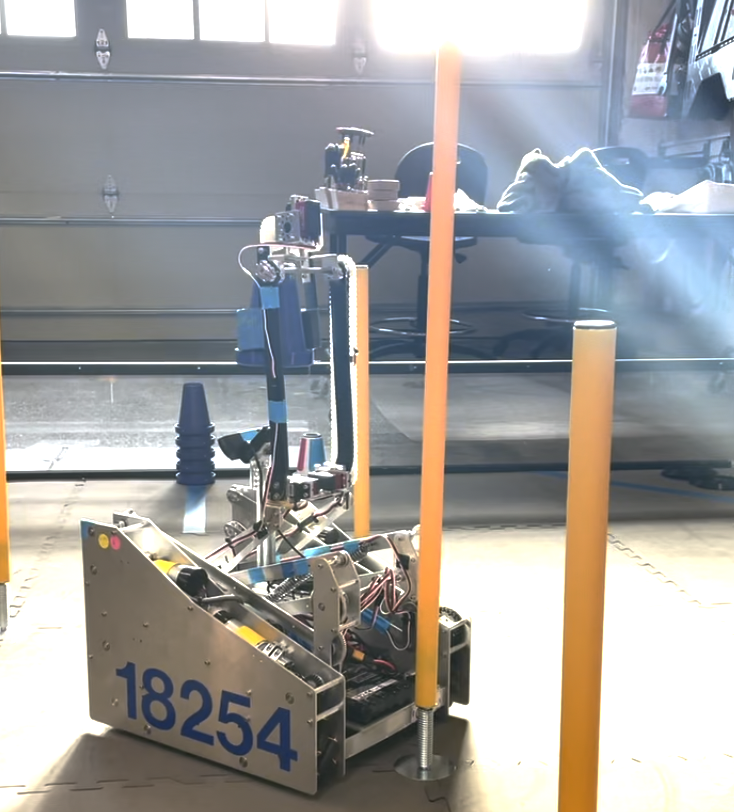

Implemented a real-time, vision-based localization stack for autonomous robots that combines blob/contour extraction, color landmark detection, and heading correction in a closed-loop navigation pipeline.

Project Overview

The system processes live camera frames to identify landmarks, estimate robot pose, and issue steering corrections at runtime. The architecture was designed for low-latency localization in constrained environments where GPS is unavailable.

Key Features

- Feature Extraction: OpenCV blob/contour detection with noise filtering for stable target tracking.

- Landmark Disambiguation: Color-based segmentation to separate landmarks and reduce ID swaps.

- Pose Correction: Real-time heading adjustment from visual offsets and angular error estimates.

- Control Integration: Localization outputs feed directly into navigation logic for closed-loop updates.
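As an illustration of the pose-correction and control-integration features above, the following is a minimal sketch of turning a landmark's horizontal pixel offset into a bearing and then into a clipped steering command. It assumes a simple pinhole camera model and a proportional controller. The function names, the 60-degree field of view, the gain, and the rate limit are all hypothetical values chosen for the example, not the project's actual parameters.

```python
import math


def bearing_from_pixel(cx, image_width, hfov_deg):
    """Angle (radians) of a landmark relative to the camera's optical axis.

    Assumes a pinhole camera whose optical axis passes through the image
    center. Positive values mean the landmark is right of center.
    """
    # Focal length in pixels, derived from the horizontal field of view.
    focal_px = (image_width / 2.0) / math.tan(math.radians(hfov_deg) / 2.0)
    return math.atan2(cx - image_width / 2.0, focal_px)


def steering_command(bearing_rad, gain=1.5, max_rate=1.0):
    """Proportional yaw-rate command, clipped to +/- max_rate.

    Sign convention (an assumption): a positive command steers toward
    a landmark on the right-hand side of the image.
    """
    command = gain * bearing_rad
    return max(-max_rate, min(max_rate, command))


if __name__ == "__main__":
    # Landmark centroid at x = 400 in a 640-pixel-wide frame.
    bearing = bearing_from_pixel(400.0, 640, 60.0)
    print(f"bearing: {math.degrees(bearing):.2f} deg")
    print(f"command: {steering_command(bearing):.3f}")
```

In a per-frame loop, the centroid from the detector would be passed to `bearing_from_pixel`, and the resulting command handed to the navigation layer. A proportional term alone is the simplest choice, and a real controller may also need damping or filtering.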

Technical Details

The pipeline uses thresholding and morphology to isolate candidate regions, then computes centroids and orientation signals for control feedback. Localization and correction execute per frame, allowing continuous trajectory updates without external positioning infrastructure.